Maria Escobar, Juanita Puentes, Cristhian Forigua, Jordi Pont-Tuset, Kevis-Kokitsi Maninis, Pablo Arbelaez

WACV (2025)

Abstract

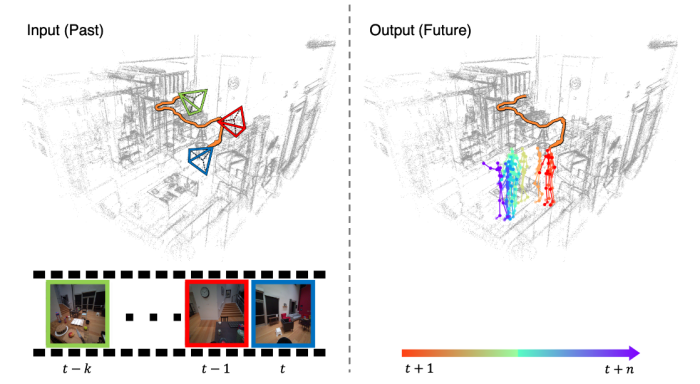

Accurately estimating and forecasting human body pose is important for enhancing the user’s sense of immersion in Augmented Reality. Addressing this need, our paper introduces EgoCast, a bimodal method for 3D human pose forecasting using egocentric videos and proprioceptive data. We study the task of human pose forecasting in a realistic setting, extending the boundaries of temporal forecasting in dynamic scenes and building on the existing framework for current-frame pose estimation in the wild. We introduce a current-frame estimation module that generates pseudo-ground-truth poses for inference, eliminating the need for the past ground-truth poses that current methods typically require during forecasting. Our experimental results on the recent Ego-Exo4D and Aria Digital Twin datasets validate EgoCast for real-life motion estimation. On the Ego-Exo4D Body Pose 2024 Challenge, our method significantly outperforms state-of-the-art approaches, laying the groundwork for future research in human pose estimation and forecasting in unscripted activities with egocentric inputs.
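The inference-time idea described above can be illustrated with a minimal Python sketch. A current-frame estimator supplies pseudo-ground-truth past poses, which then stand in for ground-truth history when forecasting. The sketch rests on several assumptions:

- All names (`forecast_without_gt`, `estimate_current_pose`, `constant_velocity_forecast`) are hypothetical.
- The flattened pose layout is assumed.
- The constant-velocity forecaster is a simple stand-in baseline, not EgoCast's learned forecasting model.

The sketch shows only the data flow.

```python
from typing import Callable, List, Sequence

# Hypothetical pose layout: flattened 3D joint coordinates.
Pose = List[float]


def constant_velocity_forecast(past: Sequence[Pose], horizon: int) -> List[Pose]:
    """Stand-in forecaster (NOT EgoCast's model): extrapolate the last
    observed per-coordinate velocity for `horizon` future steps."""
    if not past:
        raise ValueError("need at least one past pose")
    last = past[-1]
    if len(past) < 2:
        # With a single observation, assume the pose stays fixed.
        return [list(last) for _ in range(horizon)]
    velocity = [a - b for a, b in zip(last, past[-2])]
    return [[x + (k + 1) * v for x, v in zip(last, velocity)] for k in range(horizon)]


def forecast_without_gt(
    frames: Sequence[object],
    proprio: Sequence[Sequence[float]],
    estimate_current_pose: Callable[[object, Sequence[float]], Pose],
    horizon: int,
    forecaster: Callable[[Sequence[Pose], int], List[Pose]] = constant_velocity_forecast,
) -> List[Pose]:
    """Build a pseudo-ground-truth pose history with a current-frame
    estimator (one pose per frame plus its proprioceptive reading), then
    forecast future poses from that history instead of from GT poses."""
    pseudo_gt_history = [
        estimate_current_pose(frame, signal) for frame, signal in zip(frames, proprio)
    ]
    return forecaster(pseudo_gt_history, horizon)
```

The following toy usage passes in a hypothetical estimator that simply echoes the proprioceptive signal:

```python
def toy_estimator(frame, head_position):
    # Hypothetical: treat the proprioceptive reading as the pose itself.
    return list(head_position)

future = forecast_without_gt(
    [None] * 3,
    [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]],
    toy_estimator,
    horizon=2,
)
# future == [[3.0, 0.0, 0.0], [4.0, 0.0, 0.0]]
```

Keeping the estimator and forecaster as injected callables makes the point explicit: the forecaster never sees ground-truth history, only poses produced by the current-frame estimator.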