In collaboration with Gustavo Pérez, Dr. Bibiana Pinzón and Dr. Sergio Valencia from the Radiology Department, Fundación Santa Fe de Bogotá, and David Cuellar, AstraZeneca.

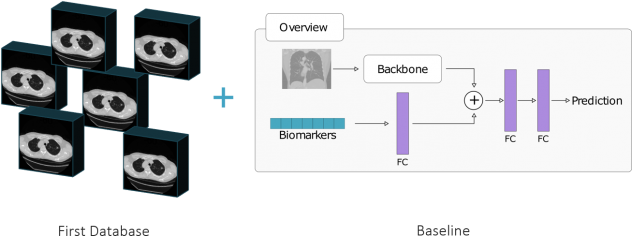

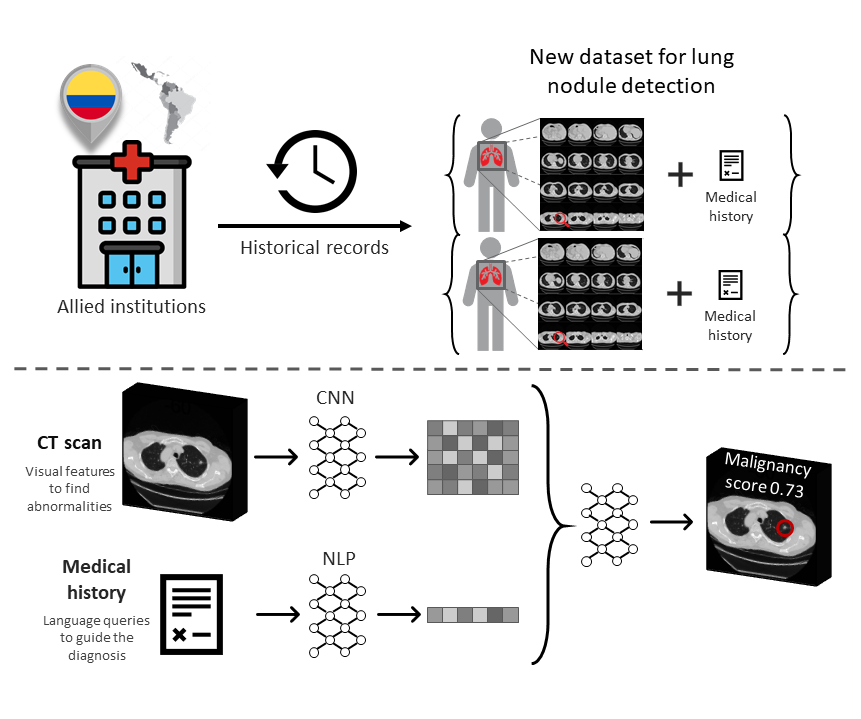

Lung cancer is the second most common type of cancer in the world, and its mortality rate is staggering. A major reason for this mortality is the near-total absence of apparent symptoms in patients with early-stage lung cancer. Consequently, the vast majority of lung cancers worldwide are diagnosed in stages III and IV, when the efficacy of existing treatments, and hence the chances of survival, are seriously compromised. Although deep learning methods have advanced automated early lung cancer diagnosis in recent years, all existing datasets and challenges seek to diagnose the disease using visual data exclusively. Specialists, however, also draw on their knowledge of the context and the patient’s medical history. In this project, we aim to create a method that combines visual and clinical information for early-stage lung cancer diagnosis.

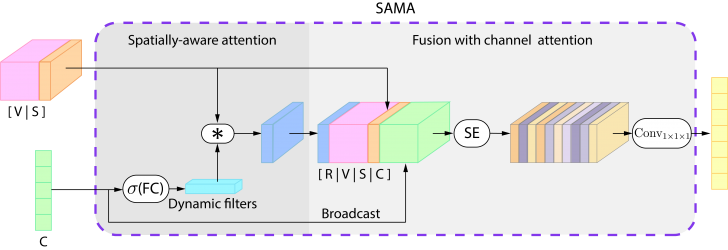

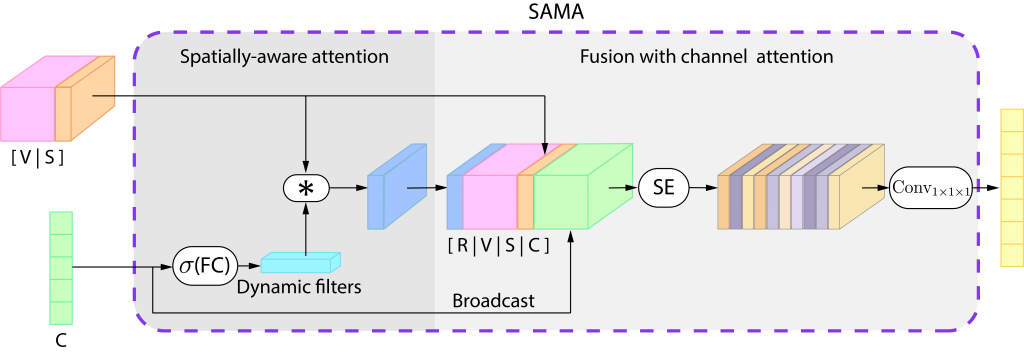

Our approach takes advantage of the spatial relationship between visual and clinical information, emulating the diagnostic process of the specialist. Specifically, we propose a multimodal fusion module composed of dynamic filtering of visual features with clinical data followed by a channel attention mechanism.
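To make the fusion idea concrete, here is a minimal NumPy sketch of one way such a module could be wired. It is not the project's implementation: the function names, the 1x1 filter generated from the clinical vector, the multiplicative combination of the response map with the visual features, and the squeeze-and-excitation style channel attention are all assumptions chosen for illustration.

```python
import numpy as np


def sigmoid(x):
    """Elementwise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))


def init_params(rng, channels, clinical_dim, reduction=2):
    """Small random weights for the hypothetical fusion module."""
    hidden = channels // reduction
    return {
        "w_gen": 0.1 * rng.normal(size=(channels, clinical_dim)),
        "b_gen": np.zeros(channels),
        "w1": 0.1 * rng.normal(size=(hidden, channels)),
        "w2": 0.1 * rng.normal(size=(channels, hidden)),
    }


def dynamic_filter(visual, clinical, w_gen, b_gen):
    """Generate a 1x1 filter from clinical data and apply it to visual features.

    visual:   (C, H, W) feature map from an image backbone
    clinical: (D,) encoded clinical record
    Returns an (H, W) response map with values in [0, 1].
    """
    filt = w_gen @ clinical + b_gen                          # (C,) one weight per channel
    response = np.tensordot(filt, visual, axes=([0], [0]))   # (H, W)
    return sigmoid(response)


def channel_gates(features, w1, w2):
    """Squeeze-and-excitation style gates: one value in [0, 1] per channel."""
    squeezed = features.mean(axis=(1, 2))     # global average pool -> (C,)
    hidden = np.maximum(w1 @ squeezed, 0.0)   # ReLU bottleneck
    return sigmoid(w2 @ hidden)               # (C,)


def fuse_modalities(visual, clinical, params):
    """Dynamic filtering followed by channel attention (illustrative only)."""
    response = dynamic_filter(visual, clinical, params["w_gen"], params["b_gen"])
    modulated = visual * response[None, :, :]  # assumption: multiplicative spatial gating
    gates = channel_gates(modulated, params["w1"], params["w2"])
    fused = modulated * gates[:, None, None]
    return fused, response
```

In this sketch the `response` map is the part that varies over space: it indicates where the clinically conditioned filter responds most strongly in the image features, which is one plausible reading of how clinical information could be expressed spatially.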

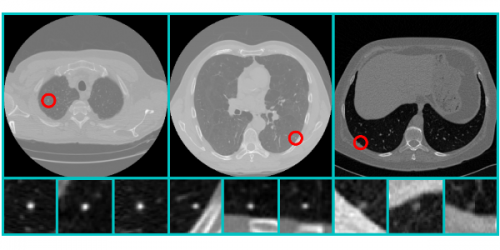

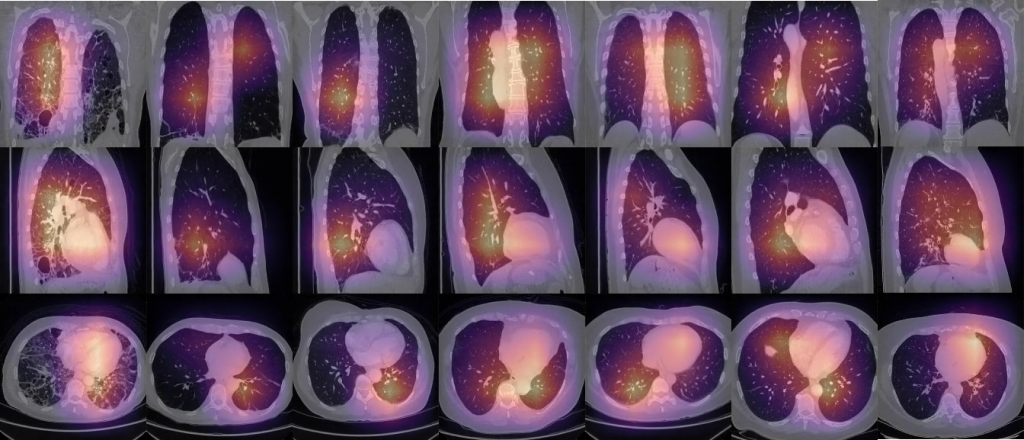

We identify the location of the pathology by relating the information given by the radiologist to the visual information. Our qualitative results show an unsupervised intermediate representation that emphasizes our method’s central idea: using multimodal information to spatially express the clinical information given by the radiologist, which enriches the final representation and produces better predictions. To read more about this project, you can visit our web page.

Awards

GOOGLE LATIN AMERICA RESEARCH AWARD 2019

L. DAZA AND P. ARBELÁEZ

We won a Google Latin America Research Award (LARA) in 2019 with the project “Lung Nodule Detection and Malignancy Prediction Using Multimodal Neural Networks”.

ISBI2018 CHALLENGE

G. PÉREZ AND P. ARBELÁEZ

We won first place in the international ISBI2018 challenge on lung nodule malignancy prediction based on sequential CT scans.